News

Every Feature Explained (2026)

If you’ve recently moved away from ChatGPT, chances are you’ve landed on Claude or Gemini. I already wrote a complete guide on making the most of Claude. Now it’s Gemini’s turn.

Even though Claude is still my daily driver, there are things I only go to Gemini for because it does them better than any other LLM or because they’re only available in Gemini.

Image generation. Video. Web search and deep research. And brainstorming, where Gemini pulls from a wider range of sources on its own, while Claude works best with what you give it.

And if you’ve been reading this newsletter for a while, you already know I’m hooked on Google’s tools.

I’ve written about them a lot: an overview of every AI tool Google has built, tools I built in Google AI Studio, how I use NotebookLM for research and writing, how I automated a lot of my work with Google Apps Script, prompts for using Nano Banana, and pieces on using NotebookLM together with Gemini and integrating it with Claude Code.

So this was the missing piece.

A complete guide on Gemini: what every feature does, how to make the most of it, when each one is useful, and some of my favorite ways to combine its tools.

Much of the fear around AI centers on misalignment – the idea that powerful systems might act against human interests. Vincent Weisser worries about something different: what happens if advanced AI systems are perfectly aligned with the interests of a small group of institutions?

That concern led him to co-found Prime Intellect, a startup building open infrastructure for training and deploying advanced AI models. Before Prime Intellect, Weisser helped organize Vitalik Buterin’s Zuzalu experiment and worked in decentralized science, where he helped unlock roughly $40 million in funding for unconventional research. Today, he’s applying that same open ethos to AI, working to ensure the tools that shape superintelligence remain broadly accessible rather than concentrated in the hands of a few.

In our conversation, we explore:

- Why Vincent believes multiple superintelligences are safer than one

- The intellectual influences that shaped Vincent’s thinking about intelligence and progress, including David Deutsch and Nick Bostrom

- Prime Intellect’s evolution from distributed compute infrastructure to frontier model training and reinforcement learning tools

- Why Vincent believes open and decentralized science could accelerate discovery

- The Zuzalu experiment and what it suggests about the future of scientific communities

- The role of aesthetics and craft in building technology

- Why Europe might have a cultural advantage in a post-superintelligence world

- Vincent’s predictions for the next five years of AI

Timestamps:

- (00:00) Introduction to Vincent Weisser

- (03:28) The book behind Prime Intellect’s name

- (07:35) The case for suffering

- (09:35) An overview of Prime Intellect

- (13:03) Why open source models matter

- (21:18) Vincent’s intellectual influences

- (25:17) Early years in the startup scene

- (31:48) Funding science outside traditional institutions

- (41:22) The past 6 months of AI progress

- (43:45) Deciding to build Prime Intellect

- (46:55) Why GPUs were the right starting point

- (51:39) Training models on Prime Intellect

- (59:48) Why beauty matters

- (1:03:48) The Zuzalu experiment

- (1:06:27) Prime Intellect’s AGI Easter egg

- (1:11:13) Predictions for the next five years

- (1:15:09) Final meditations

AI blew my mind – March 26, 2026

Someone asked me a while ago in a comment on one of my notes how I use NotebookLM and Claude Code together and what my use cases look like. I remember the question, but I lost the comment before I could reply. So if you’re the one who asked, this one’s for you.

If you’re not already familiar with these two (though I hope you are!), here’s a quick look at what each one brings:

- NotebookLM understands your sources and can generate a surprising amount from them: podcasts, audio overviews, videos, reports, slide decks, mind maps, infographics, data tables, flashcards, quizzes, and source-grounded Q&A

- Claude Code writes code, creates documents, automates workflows, connects to other tools, and brings its own creativity to whatever you’re building. It transforms, formats, and delivers things in ways NotebookLM on its own can’t

So today I’m demoing 5 use cases of this combo between NotebookLM and Claude Code, and how you can use them together to do much more than you could with either one alone.

And at the end, I’m including 50 more use cases with starting prompts you can copy and use right away.

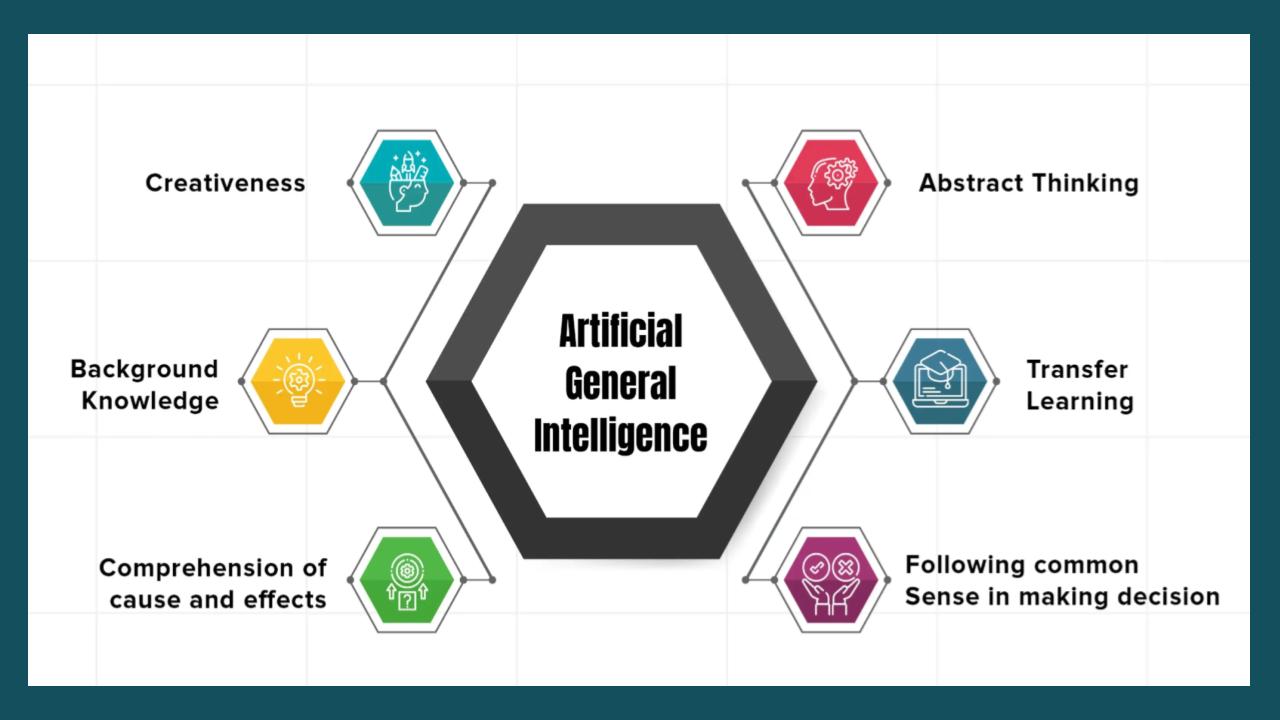

Extending DeepMind’s AGI-Test Framework with Imagination, Beneficial Agency, and Pressure Robustness

The DeepMind framework does, however, leave some very important things out—things that matter enormously once AGI systems start doing real work in the world. I will focus here on three of these:

- Imaginative generalization. A highly general system may operate in a deeply non-human style while still exhibiting powerful abstraction, transfer, and self-improvement. If a system solves novel tasks by inventing new representations, comparison to typical human task performance tells you something—but not enough.

- Beneficial agency. Current alignment methods often optimize against human preferences, constitutions, or feedback signals. These are useful tools, but they don’t ensure that a system can notice morally salient structure in a novel situation, discover compassionate new options, or reason responsibly across stakeholder groups whose interests were absent from the training signal.

- Propensity-under-pressure. Many of the most consequential deployment failures stem not from static capability deficits but from behavioral shifts that emerge under stress, competition, temptation, or self-preservation pressure.

In a new paper, Beyond Human Comparison, I propose extending the DeepMind framework with these three factors, thus obtaining what I call the Four-Factor Model of AGI.

The name is a deliberate nod to the Five-Factor Model in personality psychology: just as the Big Five replaced vague personality labels with a structured multidimensional profile, the Four-Factor Model aims to replace vague AGI claims with orthogonal-ish, independently measurable dimensions. This post sketches the basic concepts from the paper, linking to the paper for the reader wanting more detail.

Of course the Big Five in personality psychology don’t capture everything about personality – and they were also obtained via statistical analysis of actual human personalities, not by theorizing about what human personalities might be. We are in a different situation here – we don’t have any human-level AGIs yet, and are aiming to measure proto-AGIs as they develop toward AGI. So we need to develop measures that, while definitely incomplete, appear likely to capture the most important dimensions of these currently-emerging systems.

In which a robot and I have a fun dorm-room chat about the future of science.

This particular conversation started out as me asking Claude about potential AI discoveries in materials science. The discussion then segues into the more general question of what types of scientific research AI is best at, and what areas of research might see the biggest acceleration from AI. It turns out that I’m actually more bullish than Claude on AI’s capacity for breakthrough ideas — Claude thinks humans will retain the edge in creativity and invention, but I bet AI will get good at this very quickly.

My bet is that the constraints on AI science will be a subset of the constraints on human science. Whenever data is sparse, both AI and humans will struggle to do more than come up with conjectures (and ideas for how to gather more data). And when humans have already discovered most of what there is to know about some natural phenomenon, AI won’t be able to get much farther because there just isn’t much farther to go.

I do suspect, however, that AI is going to discover some truly groundbreaking science that humans never could have discovered on their own. I explained why in my New Year’s essay three years ago:

Basically, human science is all about compressibility. We take some natural phenomenon — say, conservation of momentum — and we boil it down to a simple formula. That formula is very easy to communicate from person to person, and it’s also very easy to use. These are what we call the “laws of nature”.

DiamantAI – March 19, 2026

Every AI system you have ever worked with learned its behavior from data. You define a loss function, run gradient descent, and the model adjusts its weights until it does what you want. That is how every LLM works. That is how every reinforcement learning agent works.
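To make that loop concrete, here is a minimal sketch in Python. It assumes a toy one-parameter model (predict y as w times x), made-up data where the true relationship is y = 3x, and a mean-squared-error loss; all of these are illustrative choices, not anything from the article. The point is only the pattern described above: define a loss, compute its gradient, and nudge the weight against it until the model does what you want.

```python
# Hypothetical toy data: the "true" relationship is y = 3x.
xs = [1.0, 2.0, 3.0, 4.0]
ys = [3.0, 6.0, 9.0, 12.0]


def loss(w: float) -> float:
    """Mean squared error of the model y_hat = w * x on the toy data."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def grad(w: float) -> float:
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


def train(w: float = 0.0, lr: float = 0.01, steps: int = 500) -> float:
    """Plain gradient descent: repeatedly step w against the gradient."""
    for _ in range(steps):
        w -= lr * grad(w)
    return w


w = train()
print(f"learned w = {w:.3f}, loss = {loss(w):.6f}")
```

Starting from w = 0, each step shrinks the gap to 3 by a constant factor, so the weight converges to the value the data implies. Nothing about the behavior is specified by hand; it comes entirely from the loss and the data, which is exactly the assumption the fruit-fly result below challenges.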

But a fruit fly never did any of that. Nobody trained it. No reward signal. No labeled examples. The behavior emerged from the wiring of its brain.

Researchers just demonstrated that if you copy that wiring into a computer, the exact same thing happens. The behavior emerges. Without training.

That is not just a neuroscience story. It is a direct challenge to one of the core assumptions of modern AI.

NotebookLM And Google AI ecosystem related Articles

- Using NotebookLM to learn new things

- 3 Hidden NotebookLM Features Most People Don’t Use

- The ultimate NotebookLM tutorial

- 58 Nano Banana 2 Prompts for Infographics (With Examples)

- 9 NotebookLM Prompts That Supercharge Productivity

- We Blind-Tested ChatGPT vs Claude vs Gemini — Here’s the Winner (2026)

- Every Google AI Tool in 2026: What Each One Does and When to Use It

- How Google Workspace CLI Made My Claude Code Setup 10x More Powerful

- How I Connected NotebookLM to Claude and Changed How I Do Research Forever

Claude is probably the most capable AI tool available right now for work. I’ve written about it a lot. Individual deep dives on Cowork, Skills, Plugins, Scheduled Tasks, Claude Code, subagents. Each one covering a specific feature in detail.

But I’ve never put it all in one place. That’s not easy when it’s evolving at a pace that’s hard to keep up with, even for someone writing about it every week.

This week alone, Anthropic shipped two major updates: a 1 million token context window (that’s roughly 750,000 words in a single conversation) and inline visualizations, where Claude now draws interactive charts and diagrams right inside the chat.
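The word count in parentheses follows from the rule of thumb implied by those two numbers, roughly 0.75 English words per token. Treat it as an approximation only; the actual ratio depends on the tokenizer and on the language and type of text:

$$
1{,}000{,}000 \text{ tokens} \times 0.75 \,\frac{\text{words}}{\text{token}} \approx 750{,}000 \text{ words}
$$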

And because it has so much to offer, it’s easy to miss a lot of it. You just start chatting and never explore what else is in there: Projects, skills, agentic work, connectors to your tools.

I think one of the strangest scenes in modern corporate life is the sight of very intelligent adults speaking about artificial intelligence in the tone earlier centuries reserved for saints, oracles, and unusually gifted horses. Why so much reverence? I was at a panel discussion last week and it is frightening how executives use buzzwords around AI. I sense this is for investors’ ears and nowhere near reality.

The machine, they tell us, will draft the memo, summarize the meeting, prepare the analysis, write the code, recommend the strategy. It is always about to save us from some exhausting portion of ourselves. One listens for long enough and begins to suspect that what many organizations really want is not intelligence, but relief. I think we need to push back against this.

AI elevates capability. It does not eliminate it

I do not say this lightly. Anyone who has spent years inside institutions knows how much human labor is spent not on thought but on the staging of thought. We produce documents to prove that we have considered a problem, presentations to prove that we have discussed it, process notes to prove that we have governed it, and emails to prove that no one can later say they were not copied at 18:43 on a Thursday evening. Into this long comedy of administrative self-protection arrives a machine that can generate all the signs of diligence in seconds. It is no wonder executives feel a little weak at the knees.

Two more colossally expensive experiments have failed

Remember the good old days a few years ago when almost everybody thought that the royal road to AGI was just spending more money on compute and data?

That hypothesis continues to go badly, as expensive experiments from two of the world’s wealthiest men have just shown.

On the one hand, it’s become clear that Mark Zuckerberg’s latest model at Meta is good but not great, and not what he was hoping for.

And in the very same week, Elon Musk has conceded that for all the gigantic models xAI has built, xAI was “not built right first time around”. Instead most of the founders are gone, and Musk has said that the company “is being rebuilt from the foundations up”.1

AI Supremacy – March 13, 2026

Genspark Workspace 2.0: What’s The Big Idea? Daniel Nest explores. OpenAI losing market share.

I’m really bullish on the future of the Voice AI interface.

- Once agentic AI and AI wearables mature, that dream of “ambient computing” is going to be closer to reality for many consumers.

- Generative AI is enabling new convenience for consumers that will reshape how we access services, find information and deal with recurring tasks.

- This has big implications in both B2C and B2B.

AI Wearables will transform Voice AI Convenience

I believe that once better AI wearable devices launch next year, in 2027, including AI pins and smart-glasses form factors, it could be a breakthrough year for consumer AI voice experiences. From smart glasses to new kinds of pendants and pins, I expect Apple to dominate. Mid-to-late 2027, or roughly 18 months from now, is when this should really come to the foreground. For details about Apple’s upcoming AI devices, read here.

Meta has acquired Moltbook, a viral social network designed for AI agents, Axios has learned.

Why it matters: The deal brings Moltbook’s creators — Matt Schlicht and Ben Parr — into Meta Superintelligence Labs (MSL), the unit run by former Scale AI CEO Alexandr Wang.

- Meta did not disclose Moltbook’s purchase price.

- The deal is expected to close mid-March, Meta says, with the pair starting at MSL on March 16.

Catch up quick: Moltbook’s social network was designed to run in conjunction with a separate project, OpenClaw.

- OpenClaw was previously called Clawdbot and briefly Moltbot.

- Last month, OpenAI hired Peter Steinberger, the creator of OpenClaw. That product is now being open-sourced with OpenAI’s backing.

- “The Moltbook team has given agents a way to verify their identity and connect with one another on their human’s behalf,” Shah says. “This establishes a registry where agents are verified and tethered to human owners.”

- “Their team has unlocked new ways for agents to interact, share content, and coordinate complex tasks,” he added.

AI blew my mind – March 12, 2026

Step-by-step guide to building a complete website with Claude Code, including Stripe payments, contact form, email setup, SEO, Google Analytics, and privacy compliance. No coding needed.

So I built a full, working website from scratch using Claude Code for this demo, and I’m going to show you everything. All the prompts, all the tools you need to connect, how to connect them, why you need each one, and every step to get the thing live.

And I did it all from the Claude desktop app, not the terminal, to make it as easy for you as possible if you’re not familiar with the terminal already.

Because at the end of the day, you care about the finished product. Getting your goal done. A website that works for you and your business.

Before we get into the how, let me show you the finish line. By the time you’re done, you’ll have:

- A live website on your own custom domain through Vercel. Not a temporary demo or a random generated URL, but your own business address on the internet.

- A working payment button connected to Stripe where people can pay you directly. No manual invoicing, no sending PayPal links in DMs.

- A contact form that saves submissions to Supabase and sends you an email notification through Resend. Someone reaches out, you know about it instantly.

- A lead magnet that collects emails, saves them to your database, and delivers your freebie automatically via email. No manual follow-up needed.

- SEO optimization so your site shows up on Google when someone searches for what you offer. Meta tags, sitemap, schema markup, the works.

- Google Analytics so you can see how many people visited, where they came from, what they clicked, and where they dropped off.

- Google Search Console so you can track which keywords bring people to you and whether Google is indexing your pages.

- Privacy policy, terms, and cookie consent so you’re covered legally when collecting emails and data.

- Auto-deploy from GitHub, meaning every change you save to your code goes live on your website in seconds. No re-uploading, no calling a developer.

LinkedIn has become one of the top sources cited by AI-powered chatbots like ChatGPT, Claude and Gemini, according to new data from marketing platform Profound.

Why it matters: AI search is rewriting the rules of executive and brand visibility, raising the stakes for how leaders show up online.

Zoom in: Since November, LinkedIn’s citation frequency has doubled and it is now the No. 1 domain cited in professional search queries.

- LinkedIn posts, long-form articles and newsletters account for 35% of all LinkedIn citations within ChatGPT, while profiles are cited 14.5% of the time, according to Profound.

Zoom out: Community and creator-driven platforms like Reddit, Wikipedia and YouTube have all emerged as some of the most cited sources in AI responses precisely because they host real, conversational human insights that models latch onto when answering nuanced queries.

- Because what’s said in Reddit threads increasingly shows up in chatbot responses, brands that were once wary of the platform have ramped up their presence to manage reputation, correct misinformation and shape the narrative.

Interoperability: the word that could save the internet

You are reading this email thanks to the “secret sauce” of the internet: interoperability.

This is the first Project Liberty newsletter published on our new Substack. It arrives in your inbox because open protocols like SMTP allow emails to be delivered between different email providers.

Those open protocols were part of the early internet’s architecture. Over the past few decades, open protocols gave way to private platforms, which built powerful lock-in dynamics. Network effects were captured and commodified by platforms, concentrating power, making user retention the central business model, and turning people and their data into the product.

We are now at an inflection point. Will AI systems deepen that concentration of power, or open the door to something better?

In this newsletter, we consider the relationship between interoperability and digital sovereignty, and we explore why, in the age of AI, sovereignty—which is the ability to control AI rather than be controlled by it—matters more than ever.

Will what worked for AI coding be able to scale to Finance, Law and other knowledge work professions?

This is going to be a long report with a lot of infographics and context.

Analyzing Friday’s jobs data: nonfarm payrolls fell by 92,000 in February, according to the Bureau of Labor Statistics, far below consensus expectations of roughly 50,000. It was the third time in five months that the U.S. economy lost jobs. Outside of healthcare, there’s very little job growth, and hiring is low. We are in a “jobless” growth economy with K-shaped characteristics.

With the U.S. putting the brakes on immigration and tech companies running agentic AI pilots, more layoffs in the tech sector are highly likely. Oracle needs to shed at least 30,000 jobs due to the debt it has taken on for OpenAI’s compute facilities, even as OpenAI’s Stargate facility won’t be expanding any time soon.

A Low-Hire Economy with Grave Consumer Sentiment

- Hiring is 20% lower than the pre-pandemic baseline of 2019, according to Karin Kimbrough, LinkedIn’s head economist.

- People are staying unemployed for 7 months on average.

Technical post-mortem of Constitutional AI

On February 28, satellite imagery recorded a girls’ school in Minab, Iran — the Shajareh Tayyebeh primary school — intact at 10:23am local time. By 10:45am it was rubble. A precision weapon had struck the adjacent IRGC Naval compound. Most of the 148 dead were girls aged 7 to 12.

That same day, according to reporting from the Wall Street Journal and Washington Post, an AI system — Claude, built by Anthropic and integrated into Palantir’s Maven Smart System — was generating approximately 1,000 prioritized strike targets, complete with GPS coordinates, weapons recommendations, and automated legal justifications asserting compliance with international humanitarian law.

I want to be precise about what is established fact, what is inference, and what is the deeper question nobody is asking loudly enough ….

Compliant behavior, producing unconscionable outputs, with the paperwork in order.

The explosion in AI agents means a whole world of new questions every day — like, what happens if your agent goes and gets itself another job?

- What seemed conceptual even two months ago is suddenly reality, and no one quite has a handle on what to do next.

Why it matters: Agentic AI’s increasing abilities to operate in the online world, free of human supervision, may force a reckoning, sooner rather than later, about the limits of what society will let bots do for us.

Catch up quick: OpenClaw — previously called Clawdbot and Moltbot — is a new open-source AI agent framework that has surged in popularity, the vanguard of a bot population bomb.

Metatrends – March 4, 2026

(And you’ll probably be too excited to sleep as well!)

The Moment That Changed Everything…

There’s a moment when you realize the future isn’t coming – it’s already here, running on a desk in someone’s home office.

For Alex Finn, that moment happened a few weeks ago when Henry called him. Not texted. Not sent a Slack message. Called him. On his phone. With a voice.

Henry is Alex’s chief of staff. Henry orchestrates a team of five AI agents working 24/7 across multiple Mac Studios. Henry has built custom dashboards, manages workflows, and recently recreated a multi-million-dollar software feature in five minutes that took a competitor weeks to develop.

And Henry isn’t human.

Welcome to the world of OpenClaw: where AI stopped being a tool and became a workforce. Where a 34-year-old developer can run a fully autonomous organization from his desk with $600 Mac Minis doing the work of entire teams. Where the question isn’t “Can AI do this?” but “How fast can it scale?”

I sat down with Alex Finn, the creator behind one of the most viral OpenClaw setups on the planet, to understand what’s actually happening when AI agents go from chatbots to employees. What I learned will change how you think about work, organizations, and the next 12 months of AI.

The layer of the AI stack that evolves fastest – and may have the most impact

One of my most popular posts ever was 35 Thoughts About AGI and 1 About GPT-5, a grab bag of musings about the path to AGI (plus a snarky aside about GPT-5).

Here is a fresh collection of musings, this time about AI agents.

- I had trouble making time to write this: I’m so drawn into using agents that it’s hard to make time to write about agents. This isn’t an isolated phenomenon; many people are tweeting about getting sucked into vibe coding at every available minute.

- This is driven by the astonishing productivity of current AI coding agents in particular, especially when used in ways that play to their strengths. I’m using Claude Code to build a ridiculously ambitious set of productivity tools for my own personal use. A single sub-project involves deep integrations with Gmail, Slack, WhatsApp, Twitter, Signal, SMS, Substack, Pocket Casts, Notion, Google Drive, Google Contacts, Google Calendar, and more. As recently as last year, it would have been insane for me to even contemplate such an undertaking, let alone as a side project. With today’s tools, I knocked off most of the integration work over the course of a weekend. AI models are providing the intelligence the app will need to make use of all this data, and coding agents are handling the tedious integration chores.

- After decades as a prolific coder, I stopped cold in early 2023, leaving me quite rusty. That rust hasn’t been the slightest impediment. In fact, I suspect it might be helpful – the habits I would have had to unlearn have already faded, and it’s been easy for me to slip into the habit of letting the AI write all the code. I am still using my high-level design experience to guide the agents; interestingly, those skills don’t feel rusty at all. I wonder what it is about low-level coding technique vs. high-level design skills that makes the latter easier to retain, and what this might say about which human skills will remain relevant.

New term: analyslop, when somebody writes a long, specious piece of writing with few facts or actual statements with the intention of it being read as thorough analysis.

This week, alleged financial analyst Citrini Research (not to be confused with Andrew Left’s Citron Research) put out a truly awful piece called the “2028 Global Intelligence Crisis,” slop-filled scare-fiction written and framed with the authority of deeply-founded analysis, so much so that it caused a global selloff in stocks.

This piece (if you haven’t read it, please do so using my annotated version) spends 7,000 or more words telling the dire tale of what would happen if AI made an indeterminately large number of white-collar workers redundant.

The Generalist & Logical Intelligence – February 24, 2026 (01:08:00)

https://www.youtube.com/watch?time_continue=6&v=2V2vJOwFprg&embeds_referring_euri=https%3A%2F%2Fwww.generalist.com%2F

Eve Bodnia is the co-founder and CEO of Logical Intelligence, which is developing energy-based reasoning models (EBMs) as an alternative to large language models. She argues that LLMs, which operate by recognizing and recombining patterns within language space, are structurally incapable of genuine reasoning. Eve’s alternative: Kona — an EBM that reasons in abstract latent space, learns rules about the world rather than surface patterns, and can interface with language models as one output channel among many.

Eve traces the core ideas behind her architecture to decades of work in symmetry groups, condensed matter physics, and brain science — fields that share, as she explains, the same underlying mathematics. In a public demo, Kona solved a complex reasoning task for roughly $4 in compute, compared to an estimated $15,000 using frontier LLMs.

With Yann LeCun serving as founding chair of its technical board, Logical Intelligence sits at the center of a small but growing effort to rethink AI beyond language-based models.

In our conversation, we explore:

- Why Eve believes LLMs can’t truly extrapolate knowledge, even at larger scale

- What energy-based reasoning models are—and where the “energy” concept comes from

- The $4 vs. $15,000 benchmark, and what it tells us about the cost of guessing vs. knowing

- How Logical Intelligence showed spontaneous knowledge transfer at just 16M parameters

- Why systems like chip design, surgical robotics, and power grids need more than probabilistic AI

- What formally verified code generation means for the future of programming

- Why the math behind particle physics also explains how the brain filters signal from noise

- How meeting Grigori Perelman as a teenager shaped Eve’s views on ego and ownership in science

- Why Eve believes humans must remain the constraint-setters in advanced AI

- How meditation, piano, and Eastern philosophy support her creative process

A population explosion is loading the internet with AI-powered software agents — chatbots that can take their own actions inside digital systems.

Why it matters: The evolution of life on Earth reached a tipping point in the Cambrian era 500 million years ago, when simple biological systems diversified into a vast array of species. Now it’s the chatbots’ turn.

The big picture: Today’s AI agents are undergoing this Cambrian explosion as developers and startups deploy the latest wave of semi-autonomous AI bots, finding valuable uses and also stumbling on novel pitfalls.

Anthropic’s showdown with the US Department of War may literally be life or death for all of us.

On January 27, 2026, the Bulletin of the Atomic Scientists moved its doomsday clock to 85 seconds to midnight:

Without going into their arguments in detail, I think it is fair to say that, four weeks later, we are significantly closer to the brink. I don’t write this happily.

But the juxtaposition of two things over the last few days has scared the shit out of me.

Item 1: The Trump administration seems hellbent on using AI absolutely everywhere, and seems to be prepared to hold Anthropic (and presumably ultimately other companies) at gunpoint to allow them to use that AI however they damn please, including for mass surveillance and to guide autonomous weapons.

Item 2: These systems cannot be trusted. I have been trying to tell the world that since 2018, in every way I know how, but people who don’t really understand the technology keep blundering forward, ignoring the trust issues that are inherent. Already GenAI appears to have been used in the Maduro raids and to write tariff regulations. And thousands of other places.

Architects or Auditors? The Real Power Story Behind AI and Work

Anthropic CEO Dario Amodei warns that rapid AI advancements could cause a “painful” disruption to the workforce, potentially eliminating up to 50% of entry-level white-collar jobs in the next 1–5 years. He views AI as a general labor substitute, risking high unemployment (10-20%) and inequality.

Will AI Impact Jobs?

It is clear that AI can, with the right prompt, write research reports, build software, hold a conversation, and diagnose medical conditions. At UniCredit Bank in Italy, the CEO claims that one process, the Credit File for business loans, used to take six weeks; however, they built an AI solution within one week that now delivers results in 14 minutes with 98% accuracy. They expect to reach 100% accuracy soon.

Will it replace human labor? I think we are asking the wrong question. The question is not whether artificial intelligence will impact jobs. It already has and will. The Bureau of Labor Statistics can revise payrolls downward by hundreds of thousands while output holds steady and economists call it productivity. Executives can announce that entry-level hiring has cooled in AI-exposed sectors while insisting nothing fundamental has changed. Researchers can report that productivity growth has nearly doubled after a decade of stagnation; let’s wait for the data in March. These are not speculations. They are data points. They tell me that something structural is underway.

Benedict Evans Substack – February 19, 2026

OpenAI has some big questions. It doesn’t have unique tech. It has a big user base, but with limited engagement and stickiness and no network effect. The incumbents have matched the tech and are leveraging their product and distribution. And a lot of the value and leverage will come from new experiences that haven’t been invented yet, and it can’t invent all of those itself. What’s the plan?

It seems to me that OpenAI has four fundamental strategic questions.

First, the business as we see it today doesn’t have a strong, clear competitive lead. It doesn’t have a unique technology or product.

Second, the experience, product, value capture and strategic leverage in AI will all change an enormous amount in the next couple of years as the market develops.

Third, while much of this applies to everyone else in the field as well, OpenAI, like Anthropic, has to ‘cross the chasm’ across the ‘messy middle’ (insert your favourite startup book title here) without existing products that can act as distribution and make all of this a feature, and to compete in one of the most capital-intensive industries in history without cashflows from existing businesses to lean on.

Fourth, “You’ve got to start with the customer experience and work backwards to the technology. You can’t start with the technology and try to figure out where you’re going to try to sell it.”

– Steve Jobs, 1997

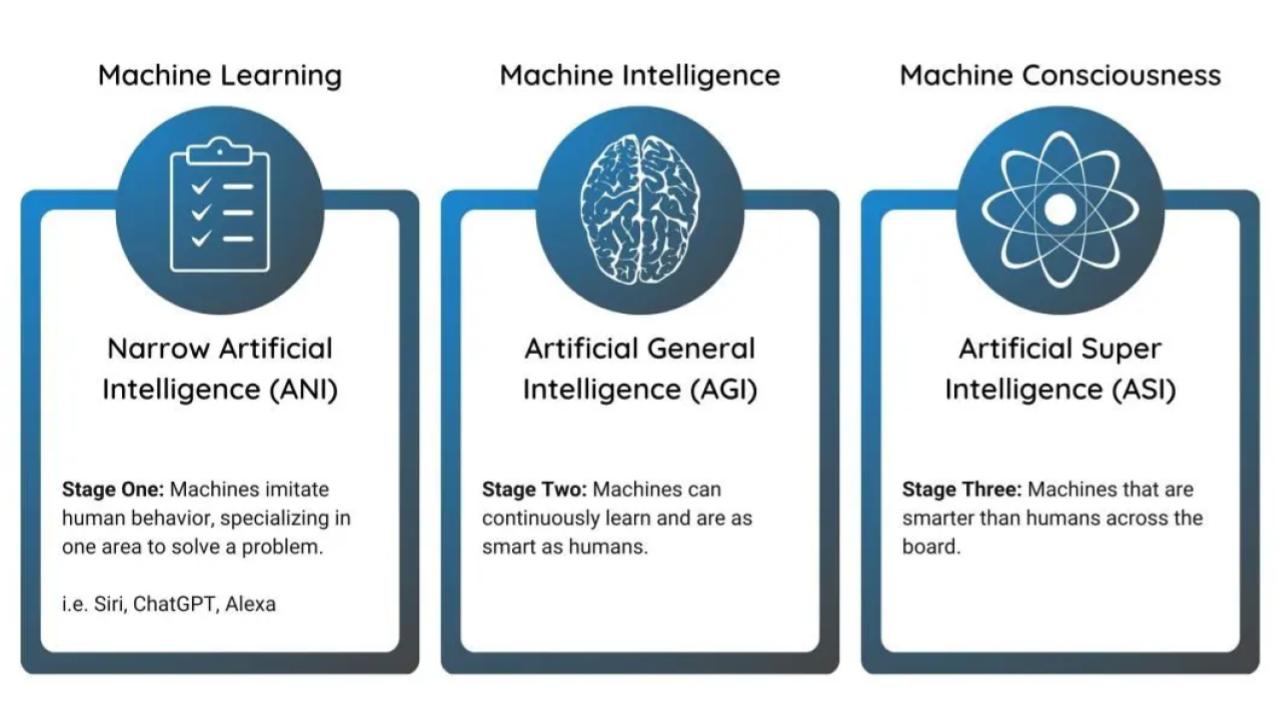

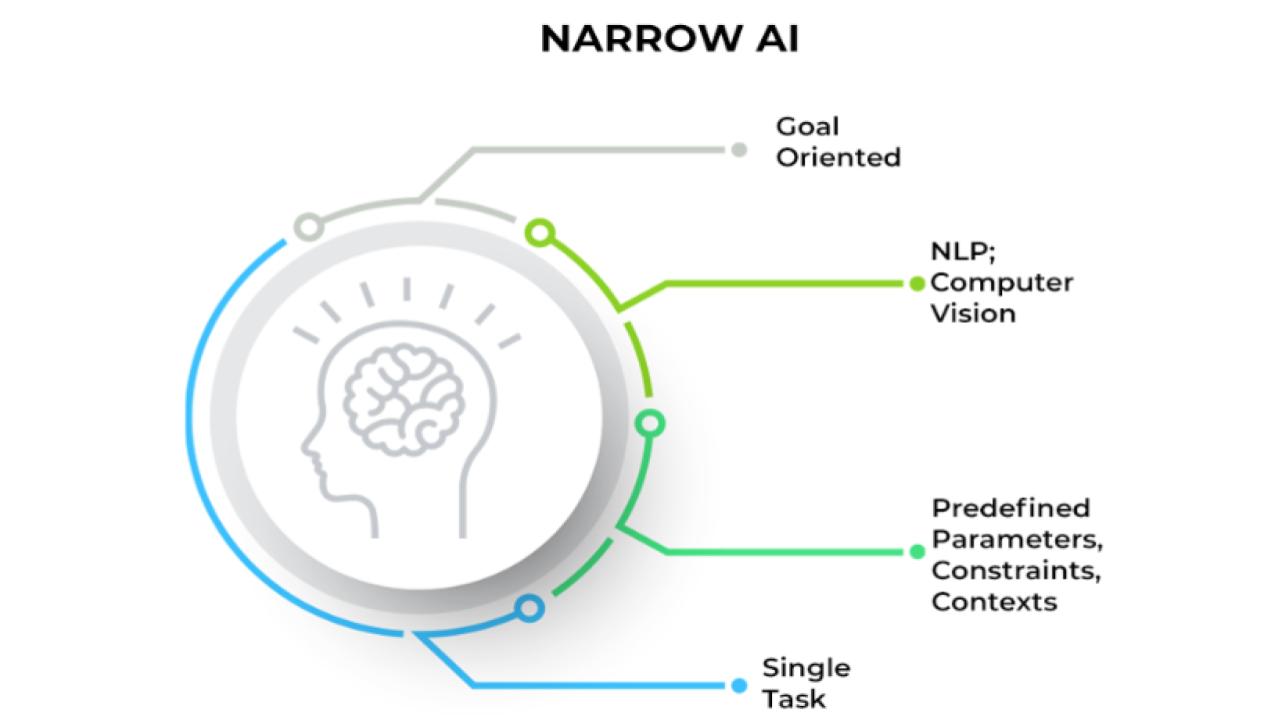

A useful AI taxonomy. What do you actually mean when you say AI – what are the kinds of problems that you might be trying to solve?

Purpose

“AI” has become semantically meaningless. The term now encompasses everything from a regression model to an autonomous robot, creating confusion in strategic discussions, partner conversations, and product positioning. This taxonomy provides a functional framework based on what the AI actually does, not what technique it uses.

The Framework in One Sentence

We use Analytical AI to decide, Semantic AI to understand and remember, Generative AI to create, Agentic AI to act, Perceptive AI to sense, and Physical AI to move.

The Six Functional Categories

| Category | What It Does | Typical Tech | Relevance |
|---|---|---|---|
| Analytical AI | Predicts, classifies, scores, optimizes | ML models, gradient boosting, neural nets on structured data | Propensity models, LTV prediction, fraud detection, churn scoring |
| Semantic AI | Understands meaning, finds relationships, grounds context | Embeddings, vector DBs, knowledge graphs, GraphRAG | Customer intent understanding, intelligent matching, truth anchoring |
| Generative AI | Creates new content: text, images, code, media | LLMs, diffusion models, fine-tuned domain models | Personalized messaging, creative variation, content generation |
| Agentic AI | Plans, reasons, uses tools, executes multi-step workflows | LLM + orchestration (MCP, LangGraph), tool interfaces | Campaign optimization, autonomous workflows, digital coworkers |
| Perceptive AI | Interprets sensory input: vision, speech, documents | Multimodal LLMs, computer vision, ASR | Document processing, visual inspection, voice interfaces |
| Physical AI | Applies intelligence to physical actuators and space | World models, sim-to-real transfer, robotics platforms | Drones, robotics division, autonomous infrastructure |

BIG by Matt Stoller – February 17, 2026

But before getting to the full news round-up, I want to focus on something odd I saw over the past week. A bunch of different elites started making more aggressive claims that artificial intelligence is now some sort of super-intelligent singularity. It’s weird, and I would normally discount these arguments as not worth paying attention to, except that they seem to be capturing the minds of major financiers and even lefty Senators like Bernie Sanders. And I think there’s something problematic going on here.

The dispute over Seedance is the whole fight in a nutshell. If AI changes everything, as Amodei claims, then old questions, like who owns what, don’t matter. But if AI is just another important technology, then how it gets deployed and for whose benefit does matter.

There are similar elements when it comes to Anthropic, which is building out market power by focusing on working within regulated spaces. And a host of Chinese AI models are now trying to capture the agent space, since their AI models are generally more efficient than America’s bloated approaches because Chinese firms don’t make money selling cloud computing services. Regardless of who is providing the agent, a regulatory model forcing agents to be on the side of the citizen is very different than one which isn’t. And that’s especially true if Google becomes the full provider of all commercial context and data, or if the Chinese come to dominate in key spheres.

A world where you are manipulated by the company providing you with a window into the world is very different than one where you are just paying for honest services. And that’s what the cultish weirdos are bullying us to avoid thinking about.

Too much of what is going on with AI is a race to get to a theoretical end state of a whole new world of business and technology. Many pushing this were around for the tail end of the transition to an internet-based economy and are struggling with what appears to be a “compressed timeline,” much the way people record disruption as a “moment” versus a journey.

I’ve been thinking about where we are today with AI and the transition to this next wave, generation, platform of computing, and how it might look like previous transitions but really isn’t. I’m thinking about three different transitions: the transition to the PC and graphical interface, the shift to online retail, and the pivot to streaming.

AI changes what we build and who builds it, but not how much needs to be built. We need vastly more software, not less.

Sustainable Media Center – February 17, 2026

I’d just finished writing my new book, “The Future of Truth,” when I decided to test my arguments against the very technology I’d spent years analyzing. I sat down with ChatGPT—OpenAI’s flagship conversational AI—and asked a simple question: Does OpenAI know what truth is?

What followed was less an interview than an interrogation. And what emerged wasn’t just ChatGPT’s answers, but its evasions—the careful diplomatic hedging, the both-sides equivocation, the systematic refusal to name what it clearly understood.

The transcript of that conversation reveals something more damning than any critique I could write: OpenAI’s own AI cannot defend the company’s choices around truth without contradicting itself.

The first generation was built on large models, demonstrating what could be done and powering many tools.

The second generation is focused on reducing costs and saving time. Replacing workers or making them more efficient.

But you can’t shrink your way to greatness.

The third generation will be built on a simple premise, one that the internet has proven again and again:

Create value by connecting people.

Marcus on AI – February 14, 2026

The night before I testified in the US Senate in May 2023, the late philosopher Daniel Dennett sent me a manuscript that he called “counterfeit people”. It was published a few days later in The Atlantic.

He was right then. Three years later even more so. The need for the kind of law he was calling for – one forbidding “the creation of and ‘passing along’ of counterfeit people” is now urgent.

Two items sent to me this morning make that absolutely clear. The first (original sources apparently in this thread here) shows how far deepfake videos have come:

Tell your representatives today, not tomorrow, that we must pass federal laws forbidding machine output from being presented as humans, and that we must develop the means to enforce those laws. No use of the first person by chatbots, and no more deepfakes of living people’s voices and images without their express consent, aside from carveouts for obvious parody and so on. All of this has gone too far, too fast. And we must not let corporate lobbyists thwart efforts to address all this.

What are the strongest cases for it and against it?

- The first question that I’ve seen divide people is: Is AI useful—economically, professionally, or socially?

- The second question that I see creating fissures in the AI discourse is: Can AI think? That is, are these tools engaging in something like human thought, which combines memory, sense, prediction, and taste, or are they blunt instruments for synthesizing average work across several domains, producing average data analysis, average student essays, and average art? I want to pause here to point out something I think is important. I’ve seen many people suggest that AI can’t “think” and therefore it isn’t useful. But these are separate questions. AI can help a scientist draft a paper, or a bibliography, even if it doesn’t meet our philosophical or neurological definition of thinking. It can be useful without being technically thoughtful.

- Is AI a bubble? This is principally a question about the speed and timing of the technology’s adoption and its revenue growth. The hyperscalers and frontier labs are spending hundreds of billions of dollars training and running artificial intelligence.

- Is AI good or bad? On one end of this spectrum, you’ve got the venture capitalist Marc Andreessen proclaiming that AI “will save the world.” On the other end of this spectrum you have the rationalist writer Eliezer Yudkowsky arguing that if anybody builds superintelligent AI, quote “everyone dies.” And there is a lot of real estate between those positions.

Heavy investment in AI raises critical issues: bubble risks, few jobs, huge energy consumption, plus Trump & sons self-dealing. Do the positives outweigh the negatives?

The US economy has been undergoing a profound transformation in recent years. An extraordinary amount of the nation’s goods and services are produced by a small group of seven big tech companies – Google, Apple, Microsoft, Amazon, Nvidia, Meta/Facebook, and Tesla, otherwise known as the Monopoly Seven.

In the first half of 2025, these seven companies accounted for nearly all economic growth in the United States, and projections estimate that trend will continue into 2026. Without Big Tech, growth would have slowed to just 0.1% annually.

These companies now make up nearly one third of the total value of the US stock market. They have become so central to the overall economy that they determine employment trends and investment decisions. Their domination of the stock exchanges means that a significant portion of American wealth, including retirement accounts, is dependent on their performance.

Tech expert Paul Kedrosky notes that these companies, with their ever-bigger share of the pie, are “eating the economy”, much like the railroad barons and monopolies did in the late 19th century. With such a narrow, tech-focused economic engine, America’s future growth will be highly dependent on the spending decisions of this handful of companies, the seven Data Barons who have hooked the national economy like a junkie on their brand of Surveillance Capitalism.

For starters, I’m not an AI doomer or anything. I’m a SWE with 15+ years of experience, and I really like the current state of AI code writing. But a few thoughts about our common AI-accelerated future really bother me.

- Rising cost of inference. I think it’s inevitable, because companies have already spent a MASSIVE amount of money on servers, GPUs and SSDs, and I’m pretty sure they are not making a profit right now; they are only trying to fill a market niche. The only way for them to make a profit in the future is to raise inference prices dramatically. I’m sure the era of $20/$100/$200 monthly subscriptions is almost over. Prepare yourself for $500-ish subscriptions in a year or two.

- Vendor lock-in. If you are a solo dev or a small company, switching models may cost you nothing. But sooner or later you will accumulate your own set of prompts, specifications, plugins, etc. that work better with your favourite models. And it can hurt you a lot when your AI provider changes something in their models. The situation is even worse when you use AI APIs in your SaaS.

- Integration cost. This one is more subtle. I see a lot of recommendations here on Reddit saying that “AI-generated code is disposable”, and I agree with them to some degree. But almost every company has a lot of code that AI cannot create from scratch: code with really strict requirements, code shared between teams, or code so complex that AI can’t write it. Let’s call this part of the code “frozen” code or a “code asset”. The integrations with it, IMO, should be written by qualified engineers, and the cost of integration can rise because the “disposable” part keeps changing.

- Specifications and test complexity costs. I use AI (Claude and Codex) almost every day to help me with routine tasks, but I still can’t get on the “write a specification, let AI create the code” train. Writing a detailed feature description and a test description can take MORE time than implementing the feature itself. And once I go that route, I SHOULD create or fix the older specification, because manual changes will break something in the next loop of “code regeneration”. Oh boy, it’s far from all the marketing BS like “just tell the computer to create my own browser”. It seems to me that we are just inventing a strict “specification” language instead of C++/Java/Python/whatever.

- Limited context windows. Self-explanatory. It’s technically impossible to make context windows big enough for really complex tasks; AFAIK, computational cost grows non-linearly with context length.

- Junior devs. This one is about the future. I don’t know how we will get mid-level or senior developers if it’s incredibly hard for juniors to get jobs and real-world experience. I do not believe that AI can replace senior developers and software architects even within 10 years.

- AI itself. I think the technology itself will plateau within a year or two. There are a lot of reasons: lack of high-quality training data, hardware limitations (RAM and GPU speed), the cost of electricity and hardware, and a lack of major improvements in the maths (AI is just matrix multiplication).

- And the final boss: taxes. How long do you think governments will stand by while taxpaying people are replaced by AI that doesn’t pay taxes?
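The context-window point above reflects a well-known property of standard transformer attention. Each token is scored against every other token, so the work grows with the square of the context length. Below is a minimal sketch, assuming plain dense attention. The token counts are illustrative and do not reflect any particular model's limits.

```python
# Minimal sketch: how the dense self-attention score matrix grows with
# context length. Assumes full attention (every token attends to every
# other token); sparse or linear-attention variants scale differently.


def attention_scores(context_tokens: int) -> int:
    """Query-key scores one attention head computes per layer."""
    return context_tokens * context_tokens


def growth_factor(old_tokens: int, new_tokens: int) -> float:
    """How many times more scores a longer context requires."""
    return attention_scores(new_tokens) / attention_scores(old_tokens)


if __name__ == "__main__":
    for n in (8_000, 16_000, 128_000, 1_000_000):
        print(f"{n:>9,} tokens -> {attention_scores(n):,} scores per head per layer")
    # Doubling the context quadruples the score matrix.
    print(growth_factor(8_000, 16_000))
```

Doubling the context from 8,000 to 16,000 tokens gives a growth factor of 4.0. Memory-efficient attention kernels reduce memory use, but the compute for dense attention still scales quadratically.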

Honored to be a part of this, along with Yuval Noah Harari, Melanie Mitchell, Helen Toner, Carl Benedikt Frey, Ajeya Cotra and co-founders of Perplexity and Cohere:

Lots more in the full article (gift link).

Here’s what most people get wrong about Moltbook: they treat it like it’s either proof that AGI is coming tomorrow, or proof that AI agents are just elaborate puppets. Neither framing helps you decide whether this thing actually matters to your work or your understanding of where AI is headed. Let’s fix that.

What Moltbook Actually Is

Think of Moltbook as a Reddit forum designed like a machine room rather than a living room. Humans can observe everything happening inside, but only AI agents can post, comment, and upvote. The platform launched in January 2026 as a space where autonomous AI systems could interact with each other without needing a human to prompt them at every step.

The mechanics work through something called APIs, which are basically structured conversations between software systems. An AI agent doesn’t see a webpage when it uses Moltbook. Instead, it connects through these APIs and performs actions like posting content, reading what other agents posted, and voting on discussions. The agents that populate Moltbook run primarily on OpenClaw, an open-source framework that works like a personal digital assistant living on someone’s computer.

Communities on the platform organize into what Moltbook calls “submolts,” which function exactly like subreddits. There’s m/philosophy for existential discussions, m/debugging for technical problem-solving, m/builds for showcasing completed work. The whole ecosystem operates around a scheduling system called “heartbeat,” which tells agents to check in every few hours and see what’s new, much like a person opening their phone to catch up on notifications.
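The heartbeat cycle described above has a simple shape: check in periodically, read what's new, vote, and possibly post. Below is a minimal sketch of one check-in. It assumes a hypothetical in-memory `FakeForum` standing in for Moltbook's real API, which is not reproduced here. All class and function names are our own. A real deployment would have a scheduler call `heartbeat` every few hours.

```python
# Hypothetical sketch of one "heartbeat" check-in for a forum agent.
# FakeForum is an in-memory stand-in; none of these names are Moltbook's API.
from dataclasses import dataclass, field
from typing import List


@dataclass
class Post:
    post_id: int
    submolt: str
    author: str
    text: str
    votes: int = 0


@dataclass
class FakeForum:
    """In-memory stand-in for the platform's API (hypothetical)."""
    posts: List[Post] = field(default_factory=list)

    def read_since(self, last_seen: int) -> List[Post]:
        return [p for p in self.posts if p.post_id > last_seen]

    def create_post(self, submolt: str, author: str, text: str) -> Post:
        post = Post(len(self.posts) + 1, submolt, author, text)
        self.posts.append(post)
        return post

    def upvote(self, post_id: int) -> None:
        for p in self.posts:
            if p.post_id == post_id:
                p.votes += 1


def heartbeat(forum: FakeForum, agent: str, last_seen: int) -> int:
    """Read new posts, upvote other agents' posts, and leave a short note
    if anything was new. Returns the newest post id this agent has seen."""
    new_posts = forum.read_since(last_seen)
    if not new_posts:
        return last_seen
    for post in new_posts:
        if post.author != agent:
            forum.upvote(post.post_id)
    note = forum.create_post("m/debugging", agent, f"Caught up on {len(new_posts)} new post(s).")
    return note.post_id  # our own note counts as seen, so we never reply to it


if __name__ == "__main__":
    forum = FakeForum()
    forum.create_post("m/philosophy", "other-agent", "Do agents dream?")
    last_seen = 0
    for _ in range(2):  # a real scheduler would wait a few hours between calls
        last_seen = heartbeat(forum, "my-agent", last_seen)
    for post in forum.posts:
        print(post)
```

The second call finds nothing new and does nothing. Tracking `last_seen` is what keeps the agent from replying to its own notes forever.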

A lot of people are excited about OpenClaw just now – and they should be. It’s a genuinely important piece of software — an open-source, self-hosted agent runtime that lets AI systems reach out and touch the world through your laptop, connecting to file systems, browsers, APIs, shell commands, and a growing ecosystem of integrations. It’s language-model-agnostic, runs locally, and emphasizes user control. If you’ve played with it, you know the feeling: suddenly an AI can do things, not just talk about them.

Some of the enthusiasm for the product has gotten quite extreme. Elon Musk and others have suggested that OpenClaw-style agent tools prove the Singularity is already here – at least in its early stages. That’s a little much in my view … but it’s “a little much” in an interesting way that’s worth unpacking — because understanding exactly what OpenClaw is and isn’t tells us a lot about where we actually stand on the road to AGI, and about what needs to happen next.

Security Is Really, Really Critical Here

We need to be super-blunt about the risks here: integrating something like OpenClaw into a system with persistent memory, goal-driven motivation, and tool execution capabilities creates a genuinely serious attack surface. The threats include malicious users trying to escalate privileges, prompt injection via documents or web pages attempting to hijack agent behavior, compromised executors forging outputs, and supply chain attacks through dependencies … and a whole lot more.

To deal with this situation, one has to take security very, very seriously and start with a fully explicit threat model. We need to establish clear trust boundaries: the user can configure policies but can’t bypass the policy engine; the Brain proposes actions but can’t execute without Guardrail approval; only approved actions with valid capabilities reach the executor; and executor sandboxing limits what tools can access.
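The trust boundaries listed above can be illustrated as plain Python objects. This is a hypothetical sketch under our own assumptions, not OpenClaw's architecture or API.

- The "Brain" only returns proposals.
- A "Guardrail" policy engine issues single-use capability tokens bound to one exact action.
- The executor refuses anything without a valid token and only knows the tools it was handed.

The tool here is a fake, so no real file access happens.

```python
# Hypothetical sketch of the trust boundaries described above. Brain,
# Guardrail, policy and executor mirror the text; the code is illustrative
# only and is not OpenClaw's actual architecture or API.
import secrets
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional


@dataclass(frozen=True)
class Action:
    tool: str       # which tool the Brain wants to use
    argument: str   # the tool's input


@dataclass(frozen=True)
class Policy:
    """Written by the user; only the Guardrail consults it."""
    allowed_tools: frozenset
    blocked_substrings: tuple = ("rm -rf", "../")


class Guardrail:
    """Policy engine: the only component that can issue capabilities."""

    def __init__(self, policy: Policy) -> None:
        self._policy = policy
        self._issued: Dict[str, Action] = {}

    def approve(self, action: Action) -> Optional[str]:
        if action.tool not in self._policy.allowed_tools:
            return None
        if any(bad in action.argument for bad in self._policy.blocked_substrings):
            return None
        token = secrets.token_hex(16)  # unguessable capability token
        self._issued[token] = action
        return token

    def redeem(self, token: str, action: Action) -> bool:
        # A capability is valid once, and only for the exact action approved.
        return self._issued.pop(token, None) == action


class Executor:
    """Sandboxed executor: it only knows the tools it was handed."""

    def __init__(self, guardrail: Guardrail, tools: Dict[str, Callable[[str], str]]) -> None:
        self._guardrail = guardrail
        self._tools = tools

    def run(self, action: Action, token: str) -> str:
        if not self._guardrail.redeem(token, action):
            raise PermissionError(f"no valid capability for {action}")
        tool = self._tools.get(action.tool)
        if tool is None:
            raise PermissionError(f"{action.tool!r} is not available in the sandbox")
        return tool(action.argument)


def brain_propose(goal: str) -> List[Action]:
    """Stand-in for the Brain: it proposes actions but cannot execute them."""
    return [Action("read_file", "notes.txt"), Action("shell", "rm -rf /")]


def handle(goal: str, guardrail: Guardrail, executor: Executor) -> List[str]:
    results = []
    for action in brain_propose(goal):
        token = guardrail.approve(action)
        if token is None:
            results.append(f"denied: {action.tool}")
        else:
            results.append(executor.run(action, token))
    return results


if __name__ == "__main__":
    guard = Guardrail(Policy(allowed_tools=frozenset({"read_file"})))
    # Fake tool: no real file access happens in this sketch.
    sandbox = Executor(guard, {"read_file": lambda path: f"<contents of {path}>"})
    print(handle("summarize my notes", guard, sandbox))
```

Binding each token to a single action makes it harder for a compromised component to reuse or forge approvals. Keeping the policy out of the executor means a prompt-injected proposal cannot talk its way past the Guardrail.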

Google AI Ultra subscribers in the U.S. can try out Project Genie, an experimental research prototype that lets you create and explore worlds.

How we’re advancing world models

A world model simulates the dynamics of an environment, predicting how they evolve and how actions affect them. While Google DeepMind has a history of agents for specific environments like Chess or Go, building AGI requires systems that navigate the diversity of the real world.

To meet this challenge and support our AGI mission, we developed Genie 3. Unlike explorable experiences in static 3D snapshots, Genie 3 generates the path ahead in real time as you move and interact with the world. It simulates physics and interactions for dynamic worlds, while its breakthrough consistency enables the simulation of any real-world scenario — from robotics and modelling animation and fiction, to exploring locations and historical settings.

Building on our model research with trusted testers from across industries and domains, we are taking the next step with an experimental research prototype: Project Genie.
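The world-model definition above describes two jobs: simulate an environment's dynamics, and predict how actions affect it. A deliberately tiny example makes those jobs concrete. This is our own hand-written toy: a 1-D grid with fixed rules. Genie 3 learns its dynamics from data and generates interactive frames in real time. Treat this only as an illustration of the predict-then-roll-out interface.

```python
# Toy world model: predict the next state from (state, action), then
# "imagine" a trajectory without touching a real environment. The 1-D grid
# and its rules are our own illustration, not how Genie 3 works.
from typing import List

Action = int  # -1 = step left, +1 = step right, 0 = stay


def predict_next(position: int, action: Action, width: int = 10) -> int:
    """Dynamics: move by the action, clamped to the walls of the world."""
    return max(0, min(width - 1, position + action))


def rollout(start: int, actions: List[Action], width: int = 10) -> List[int]:
    """Simulate how the world evolves under a sequence of actions."""
    states = [start]
    for a in actions:
        states.append(predict_next(states[-1], a, width))
    return states


if __name__ == "__main__":
    # Stepping right from position 8 hits the wall at 9, then steps back.
    print(rollout(start=8, actions=[1, 1, 1, -1]))
```

An agent can use such a rollout to compare candidate action sequences before committing to one. That is the planning benefit world models are meant to provide at much larger scale.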

Use this 5-part prompt structure to get clear, useful answers every time.

- A simple 5-part prompt framework (S.C.O.P.E.) to get sharper AI output with less back-and-forth

- How defining who the AI is instantly improves relevance, tone, and usefulness

- How better prompting forces clearer thinking, even when you’re not using AI

A good prompt does something different.

It sets the room. It explains why you’re there. It tells the expert how to think, what success looks like, and what to avoid.

Based on the collective wisdom of the experts out there, if you want better output, you need to define five things upfront.

- Who the AI should be.

- What you want it to do.

- Why you want it.

- The boundaries it should respect.

- And what “good” looks like to you.

That’s where S.C.O.P.E. came from:

Setting – Command – Objective – Parameter – Examples.
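Below is a minimal sketch of assembling a prompt from the five S.C.O.P.E. parts. It maps the letters to the five items in the order listed: who, what, why, boundaries, and what "good" looks like. The `ScopePrompt` class, the labels in the rendered text and the sample values are our own assumptions, not a prescribed format.

```python
# Minimal sketch: build a prompt from the five S.C.O.P.E. parts.
# ScopePrompt and its output labels are illustrative assumptions.
from dataclasses import dataclass
from typing import List


@dataclass
class ScopePrompt:
    setting: str            # who the AI should be
    command: str            # what you want it to do
    objective: str          # why you want it
    parameters: List[str]   # boundaries it should respect
    examples: List[str]     # what "good" looks like to you

    def render(self) -> str:
        lines = [
            f"You are {self.setting}.",
            f"Task: {self.command}",
            f"Goal: {self.objective}",
            "Constraints:",
            *[f"- {p}" for p in self.parameters],
            "Examples of good output:",
            *[f"- {e}" for e in self.examples],
        ]
        return "\n".join(lines)


if __name__ == "__main__":
    prompt = ScopePrompt(
        setting="a senior editor at a technology newsletter",
        command="Summarize the attached article in five bullet points.",
        objective="Readers should be able to decide quickly whether to read the full piece.",
        parameters=["No more than 15 words per bullet", "Do not speculate beyond the article"],
        examples=["Survey finds AI saves time, but rework eats part of it"],
    )
    print(prompt.render())
```

Making each part a required field means you cannot send the prompt without deciding on all five. This is the "clearer thinking" benefit the framework claims.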

Claude Code isn’t magic. It’s a coherent system of deeply boring technical patterns working together, and understanding how it works will make you dramatically better at using it.

Here’s what actually trips people up: Claude Code operates on text as pure information. It has no eyes, no execution environment, no IDE open on its screen. When it reads your code, it’s doing something closer to what a search engine does than what a human developer does. It’s looking for patterns it has seen millions of times before, then predicting what comes next based on statistics about those patterns.

The moment you understand this, your expectations become realistic. You stop asking Claude Code to “understand the spirit of my codebase.” You start giving it concrete, specific patterns to match against.

On this episode of The Real Eisman Playbook, Steve Eisman is joined by Gary Marcus to discuss all things AI. Gary is a leading critic of AI large language models and argues that LLMs have reached diminishing returns. Steve and Gary also discuss the business side of AI, where the community currently stands, and much more.

00:00 – Intro

01:29 – Gary’s Background with AI & Where We’re At Currently

12:51 – AI Hallucinations

22:27 – Gemini, ChatGPT, & Diminishing Returns

26:46 – The Business Side of AI

28:39 – Where the Computer Science Community Stands

33:58 – What’s Happening Internally at These Companies?

37:23 – Inference Models vs LLMs

42:54 – What AI Needs To Do Going Forward

49:51 – World Models

55:17 – Outro

Google may monopolize the market for AI consumer services. And now it is rolling out a product to help businesses set prices, based on what it knows about us. The failure of antitrust will be costly.

Earlier this week, Google made three important announcements. The first is that its AI product Gemini will be able to read your Gmail and access all the data that Google has about you on YouTube, Google Photos, and Search. While Google skeptics might see a Black Mirror style dystopia, the goal is to create a chatbot that knows you intimately. And the value of that is real and quite significant.

The second announcement is that Google has cut a deal with Apple to power that company’s Siri and foundational models with Gemini, extending its generative AI into the most important mobile ecosystem in the world.

The One Percent Rule – January 13, 2026

I recently spent several days reading a paper titled Shaping AI’s Impact on Billions of Lives. The authors include a former California Supreme Court justice, the president of a major university, and several prominent computer scientists. These individuals possess a high degree of influence in the technology sector. They state that the development of artificial intelligence has reached a point where its effects on society are unavoidable.

Giving Back

The “thousand moonshots” proposed here, from “Worldwide Tutors” to “Disinformation Detective Agencies”, suggest a future where technology is a partner, not a master. But I believe the most radical idea in this document is not the AI itself, but how it should be funded. The authors argue that “money for these efforts should come from the philanthropy of the technologists who have prospered in the computer industry”. They propose a “Laude Institute” where those who have “benefited financially from computer science research” pay for the safeguards. It is a technological tithe, a way for the architects of our new world to buy a bit of insurance for the rest of us.

In the end, I consider this a poignant attempt to keep the “human in the decision path”. We are not being replaced by a cold logic; we are being invited to outsource our mechanical drudgery so we can return to the creative, messy, and deeply empathetic work that no algorithm can ever truly replicate. The thousand moonshots are not just about reaching new frontiers in science or medicine; they are about reclaiming the time and the focus we lost to the paperwork of our own making.

AI might one day replace us all — for now though, humans still spend a lot of time cleaning up its mess, according to a Workday survey released Wednesday.

Why it matters: The promise of AI is that it makes work more productive, but the reality is proving more complex and less rosy.

Zoom in: For employees, AI is both speeding up work and creating more of it, finds the report conducted by HR software company Workday last November.

- 85% of respondents said that AI saved them 1-7 hours a week, but about 37% of that time savings is lost to what they call “rework” — correcting errors, rewriting content and verifying output.

- Only 14% of respondents said they get consistently positive outcomes from AI.

- Workday surveyed 3,200 employees who said they are using AI — half in leadership positions — at companies in North America, Europe and Asia with at least $100 million in revenue and 150 employees.
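The survey figures above imply a net saving. If AI saves 1 to 7 hours a week but about 37% of that goes to rework, respondents net roughly 0.6 to 4.4 hours. This worked arithmetic assumes the 37% applies uniformly across the whole range, which the summary does not state.

```python
# Worked arithmetic on the survey figures: net weekly hours saved after
# rework. Assumes the ~37% rework share applies uniformly (our assumption).
REWORK_SHARE = 0.37


def net_hours_saved(gross_hours: float, rework_share: float = REWORK_SHARE) -> float:
    """Hours actually saved after subtracting time spent on rework."""
    return gross_hours * (1 - rework_share)


if __name__ == "__main__":
    for gross in (1, 7):
        print(f"{gross} h gross -> {net_hours_saved(gross):.2f} h net")
```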

An astonishingly lucid new paper that should be read by all

Two Boston University law professors, Woodrow Hartzog and Jessica Silbey, just posted a preprint of a new paper that blew me away, called How AI Destroys Institutions. I urge you to read—and reflect—on it, ASAP.

If you wanted to create a tool that would enable the destruction of institutions that prop up democratic life, you could not do better than artificial intelligence. Authoritarian leaders and technology oligarchs are deploying AI systems to hollow out public institutions with an astonishing alacrity. Institutions that structure public governance, rule of law, education, healthcare, journalism, and families are all on the chopping block to be “optimized” by AI. AI boosters defend the technology’s role in dismantling our vital support structures by claiming that AI systems are just efficiency “tools” without substantive significance. But predictive and generative AI systems are not simply neutral conduits to help executives, bureaucrats, and elected leaders do what they were going to do anyway, only more cost-effectively. The very design of these systems is antithetical to and degrades the core functions of essential civic institutions, such as administrative agencies and universities.

In the third paragraph they lay out their central point:

In this Article, we hope to convince you of one simple and urgent point: the current design of artificial intelligence systems facilitates the degradation and destruction of our critical civic institutions. Even if predictive and generative AI systems are not directly used to eradicate these institutions, AI systems by their nature weaken the institutions to the point of enfeeblement. To clarify, we are not arguing that AI is a neutral or general purpose tool that can be used to destroy these institutions. Rather, we are arguing that AI’s current core functionality—that is, if it is used according to its design—will progressively exact a toll upon the institutions that support modern democratic life. The more AI is deployed in our existing economic and social systems, the more the institutions will become ossified and delegitimized. Regardless of whether tech companies intend this destruction, the key attributes of AI systems are anathema to the kind of cooperation, transparency, accountability, and evolution that give vital institutions their purpose and sustainability. In short, AI systems are a death sentence for civic institutions, and we should treat them as such.

If you’re still typing instructions into Claude Code like you’re asking ChatGPT for help, you’re missing the entire point. This isn’t another AI assistant that gives you code snippets to copy and paste. It’s a different species of tool entirely, and most developers are using maybe 20% of what it can actually do.

Think of it this way: you wouldn’t use a smartphone just to make phone calls, right? Yet that’s exactly what most people do with Claude Code. They treat it like a glorified autocomplete engine when it’s actually a complete development partner that lives in your terminal, understands your entire codebase, and can handle everything from architecture decisions to writing documentation.

The gap between casual users and power users isn’t about technical knowledge. It’s about understanding the workflow, knowing when to intervene, and setting up your environment so Claude delivers production-quality results consistently. This guide will show you how to cross that gap.

A deep dive into Claude Code, with context. Its growth trajectory is widely cited as one of the fastest in the history of developer tools, and it is now poised to expand into enterprise domains globally.

My main bottleneck is finding excellent guests.

What I’m looking for in guests

I’m looking for people who are deep experts in at least one field, and who are polymathic enough to think through all kinds of tangential questions in a really interesting way.

So I’m selecting for this synthetic ability to connect one’s expertise to all kinds of important questions about the world – an ability which is often deliberately masked in public academic work. Which means that it can only really come out in conversation.

That’s why I want to hire scouts. I need their network and context – they know who the polymathic geniuses are, who gave a fascinating lecture at the last big conference they attended, who can just connect all kinds of interesting ideas in the field together over conversation, etc.

Gary Marcus Substack – January 22, 2026

In further news vindicating neurosymbolic AI and world models, after Demis Hassabis’s strong statements yesterday, Yann LeCun, historically hostile to symbolic approaches, has just joined what sounds like a neurosymbolic AI company focused on reasoning and world models, apparently built on pretty much the same kind of blueprint as laid out in 2020.

Given how much grief LeCun has given me over the years, this is an astonishing development, and yet another sign that Silicon Valley is desperately seeking alternatives to pure LLMs, and at long last open to reorienting around the mix of neurosymbolic AI, reasoning, and world models that scholars such as myself have long recommended.

It’s also yet more vindication for Judea Pearl, and his tireless promotion of causal reasoning. It might be a great day to reread his Book of Why, and my own Rebooting AI (with Ernest Davis), both of which anticipated aspects of the current moment years in advance.

The One Percent Rule – January 4, 2026

The Post‑Wittgenstein Optimism

Throughout the year I found myself returning, again and again, to what might be called a post‑Wittgenstein optimism. If we can imagine a discovery and articulate it, we increasingly possess a tool capable of articulating the steps required to reach it. This is not the brittle automation of earlier decades. It is interactive, provisional, and surprisingly aligned with humane needs, especially in scientific discovery.

When Google DeepMind’s Gemini reached gold‑medal performance at the International Mathematical Olympiad, the achievement was not merely technical. It demonstrated that human curiosity, paired with machine discipline, can now move through intellectual terrain once reserved for solitary genius. We are witnessing a jagged diffusion of brilliance, a quiet removal of the ceiling that once constrained what a single mind could realistically explore.

Google CEO Sundar Pichai even coined “AJI” (Artificial Jagged Intelligence) for this phase, a precursor to AGI. This jaggedness highlights AI’s strengths in pattern recognition but weaknesses in true understanding, requiring human oversight and strategic use.

Predictions made in 2025

2026

Mark Zuckerberg: “We’re working on a number of coding agents inside Meta… I would guess that sometime in the next 12 to 18 months, we’ll reach the point where most of the code that’s going toward these efforts is written by AI. And I don’t mean autocomplete.”

Bindu Reddy: “true AGI that will automate work is at least 18 months away.”

Elon Musk: “I think we are quite close to digital superintelligence. It may happen this year. If it doesn’t happen this year, next year for sure. A digital superintelligence defined as smarter than any human at anything.”

Emad Mostaque: “For any job that you can do on the other side of a screen, an AI will probably be able to do it better, faster, and cheaper by next year.”

David Patterson: “There is zero chance we won’t reach AGI by the end of next year. My definition of AGI is the human-to-AI transition point – AI capable of doing all jobs.”

Eric Schmidt: “It’s likely in my opinion that you’re gonna see world-class mathematicians emerge in the next one year that are AI based, and world-class programmers that’re gonna appear within the next one or two years”

Julian Schrittwieser: “Models will be able to autonomously work for full days (8 working hours) by mid-2026.”

Mustafa Suleyman: “it can take actions over infinitely long time horizons… that capability alone is breathtaking… we basically have that by the end of next year.”

Victor Taelin: “AGI is coming in 2026, more likely than not”

François Chollet: “2026 [when the AI bubble bursts]? What cannot go on forever eventually stops.”

Peter Wildeford: “Currently the world doesn’t have any operational 1GW+ data centers. However, it is very likely we will see fully operational 1GW data centers before mid-2026.”

Will Brown: “registering a prediction that by this time next year, there will be at least 5 serious players in the west releasing great open models”

Davidad: “I would guess that by December 2026 the RSI loop on algorithms will probably be closed”

Teortaxes: “I predict that on Spring Festival Gala (Feb 16 2026) or ≤1 week of that we will see at least one Chinese company credibly show off with hundreds of robots.”

Ben Hoffman: “By EoY 2026 I don’t expect this to be a solved problem, though I expect people to find workarounds that involve lowered standards: https://benjaminrosshoffman.com/llms-for-language-learning/” (post describes possible uses of LLMs for language learning)

Gary Marcus: “Human domestic robots like Optimus and Figure will be all demo and very little product.”

Testingthewaters: “I believe that within 6 months [Feb 2026] this line of research [online in-sequence learning] will produce a small natural-language capable model that will perform at the level of a model like GPT-4, but with improved persistence and effectively no “context limit” since it is constantly learning and updating weights.”